Welcome to issue #1 of the Openhouse substack!

Our goal today is to launch a proof-of-concept app for real-time audio rooms. You can find a live version of the app at: https://openhouse-app.herokuapp.com

If you want to dive into code, the entire Openhouse repository is available on GitHub:

In the coming weeks, we’ll take a deeper dive into the technologies that underpin this demo, and build out a more complete feature set.

Disclaimer

Before getting into it, I want to take this opportunity to dissolve any assumptions that I’m an expert in this field. While I do have a background in software, I have zero experience dealing with audio/video (A/V) networking and its associated challenges. My prior work has largely been in payments and authentication. When I started looking into the world of A/V apps, I immediately recognized the familiar signs of a field I knew I would find interesting:

An ocean of acronyms

Endless rabbit holes

Clear optimization goals

Protocols on protocols on protocols

I’ll do my best to simplify the ideas we come across, but I cannot make any guarantees that everything will make sense. If you think I’ve explained anything incorrectly, please let me know! My Twitter DMs are open, and I’ll generally respond within 24h.

So where do we start?

The answer, my friend, is WebRTC.

WebRTC stands for Web Real-Time Communication. In simple terms, it’s a system that enables web apps to record A/V data from a user’s device and stream it to others.

The history of WebRTC is shrouded in corporate acquisitions, competing standards, and long-lost technical discussions. It’s taken years for the world’s most popular web browsers to roll out support for the technology, and that effort is still ongoing today. Despite this, WebRTC is widely adopted and used in many of the world’s largest communication apps such as Google Meet, Facebook Messenger, and Discord.

Increasingly, developers are turning to WebRTC to build real-time communication apps. Why? Primarily because WebRTC is native to the browser. It’s hard to overstate the importance of this point. If you want to build a web app with real-time A/V communication, WebRTC is the only way to do so without asking users to download a plug-in like Flash.

The promise of WebRTC is that a developer can write a couple dozen lines of JavaScript and have a real-time A/V app running and compatible with any browser. In reality, WebRTC is an umbrella term for a collection of standards, protocols, and APIs. Its implementation is not uniform across browsers. And there’s no one right way to use it. We’ll take a closer look at WebRTC in future issues, but for now let’s keep things simple.

Computer networking and WebRTC

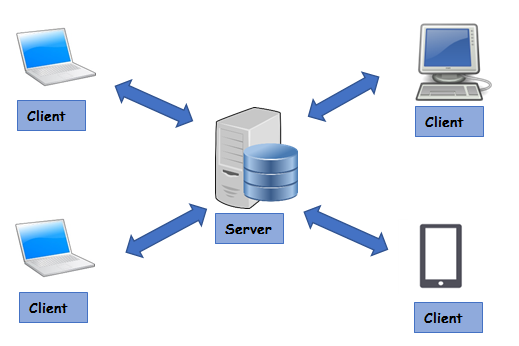

To get started with WebRTC, we’ll leverage a library called PeerJS which provides a simplified interface to the features we’ll need. PeerJS gets its name from peer-to-peer (P2P) networking. P2P sits in contrast to the more common client-server architecture that powers the vast majority of web services today. Here’s an illustration of a traditional app with a centralized server at the hub:

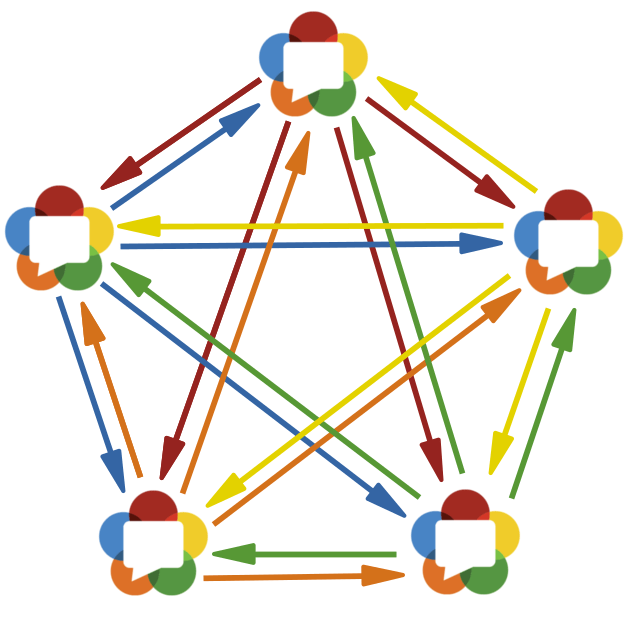

In contrast to this, we’re going to use P2P networking. This means users won’t send A/V data through a centralized hub. Central servers add latency. And latency hurts the real-time experience. Instead, each “peer” will have a direct network connection to every other peer it’s in conversation with. If there are N users in a room, each user will have N-1 two-way (uplink/downlink) connections, one to each of the other users in the room.
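To make the N-1 figure concrete, here is a minimal sketch that counts the direct links in a fully connected room. The helper names (`connectionsPerPeer`, `meshLinks`) and the sample peer IDs are made up for illustration; they are not part of PeerJS or WebRTC.

```typescript
// Hypothetical helpers for illustration only; not part of PeerJS or WebRTC.

/** Number of direct connections one peer maintains in a room of n users. */
function connectionsPerPeer(n: number): number {
  return Math.max(0, n - 1);
}

/** Every unordered pair of peers, i.e. every direct link in the room. */
function meshLinks(peerIds: string[]): Array<[string, string]> {
  const links: Array<[string, string]> = [];
  peerIds.forEach((a, i) => {
    // Pair each peer only with the peers listed after it, so no link is counted twice.
    for (const b of peerIds.slice(i + 1)) {
      links.push([a, b]);
    }
  });
  return links;
}

const room = ["ana", "ben", "cat", "dev"];
console.log(connectionsPerPeer(room.length)); // 3
console.log(meshLinks(room).length); // 6, which is N * (N - 1) / 2 for N = 4
```

Each peer only has to manage its own N-1 connections, but the room as a whole carries N(N-1)/2 links, so the total grows quickly as people join.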

It’s important to understand the trade-offs inherent to this design decision. Every application has some features it needs to optimize at the expense of others. For example, in payments, it pays to be correct. A bad deployment that charges a customer the wrong amount or delivers funds in the wrong currency can have disastrous consequences for your business and reputation. In payments, we typically achieve correctness by giving up something like latency. Thus our payment systems are slow, but correct.

Real-time A/V communication is exactly the opposite. Our brains are incredibly good at filling in the gaps of reality. If a video stream drops a frame or an audio stream cuts out briefly, our brains can usually make sense of the information available and continue without even noticing something has happened. However, if you consistently delay a stream of A/V data by a second or two, it can create a terribly frustrating experience for everyone involved.

In real-time A/V apps, we generally optimize for lower latency.

By using a P2P network, we can minimize latency at the expense of increased bandwidth. In a room of 10 people, each person will have 9 two-way connections. For a device with poor network connectivity, this can be an awful lot to ask for. As you can imagine, this choice will present scaling challenges, and I anticipate we will run into these problems in the future.
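Here is a rough back-of-the-envelope sketch of what that bandwidth cost might look like. The 32 kbps per-stream bitrate is an assumption I picked for illustration, not a measurement from the app, and the function name is hypothetical. It models the worst case, where every participant sends audio to every other participant.

```typescript
// Assumed bitrate of one audio stream, in kilobits per second.
// This is an illustrative guess, not a value measured from Openhouse.
const ASSUMED_AUDIO_KBPS = 32;

interface BandwidthEstimate {
  uplinkKbps: number;
  downlinkKbps: number;
}

/** Worst-case bandwidth for one peer when everyone streams to everyone else. */
function meshBandwidthPerPeer(
  roomSize: number,
  streamKbps: number = ASSUMED_AUDIO_KBPS
): BandwidthEstimate {
  const others = Math.max(0, roomSize - 1);
  return {
    uplinkKbps: others * streamKbps, // one outgoing copy per other peer
    downlinkKbps: others * streamKbps, // one incoming stream per other peer
  };
}

const estimate = meshBandwidthPerPeer(10);
console.log(estimate.uplinkKbps, estimate.downlinkKbps); // 288 288
```

The uplink is the number to watch: under these assumptions a peer uploads 9 copies of its own audio in a room of 10, whereas with a central hub it would upload only one.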

There’s one last design point I want to call out before diving into the code. Despite using a P2P network, we will still use a central signaling server to help peers find one another. The signaling server will not process any audio data itself. Instead, it will broker connections and allow users to signal to others when they wish to join a room. The signaling server is how peers will share their IP addresses with one another, allowing them to create a direct connection.
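To illustrate the bookkeeping side of signaling, here is a minimal in-memory sketch. `RoomRegistry` and its methods are hypothetical names of my own, not the Openhouse or PeerJS implementation, and the sketch leaves out the network transport and the exchange of connection details entirely. It only shows the core idea: when a peer joins a room, the server tells it who is already there so it knows whom to connect to.

```typescript
// Hypothetical room registry for illustration; a real signaling server would
// expose this over the network and relay connection details between peers.
class RoomRegistry {
  // Object.create(null) avoids clashes between room IDs and built-in object keys.
  private rooms: Record<string, string[]> = Object.create(null);

  /** Adds a peer to a room and returns the peers that were already in it. */
  join(roomId: string, peerId: string): string[] {
    const members = this.rooms[roomId] ?? [];
    const existing = members.filter((id) => id !== peerId);
    this.rooms[roomId] = existing.concat(peerId);
    return existing;
  }

  /** Removes a peer and drops the room once it is empty. */
  leave(roomId: string, peerId: string): void {
    const remaining = (this.rooms[roomId] ?? []).filter((id) => id !== peerId);
    if (remaining.length === 0) {
      delete this.rooms[roomId];
    } else {
      this.rooms[roomId] = remaining;
    }
  }
}

const registry = new RoomRegistry();
console.log(registry.join("lobby", "ana")); // empty list: nobody to connect to yet
console.log(registry.join("lobby", "ben")); // list containing "ana"
console.log(registry.join("lobby", "cat")); // list containing "ana" and "ben"
```

Note that no audio ever touches this structure. The server only tracks who is in which room; the streams themselves flow directly between peers.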

Okay, enough product talk! Let’s get building!

Into the code

Note

We have a rather diverse audience reading this substack. If you’re not interested in the code, you can safely ignore this section. Each issue will typically present “product talk” in text above and walk through “engineering talk” in a video below.

Wrapping up

Now we have a proof-of-concept running that supports audio rooms for real-time communication! It doesn’t look like much right now. The UX is horrible, and the app still needs many community features. But this is a good start! Next week, we’ll take a look at improving the UX and learning a little more about WebRTC.

Getting involved

We’re looking for any and all contributors to help chart Openhouse’s future and make it a reality. If you’re interested in joining our journey, you can reach me on Twitter.

And if you have friends that would be interested in this project, let them know by sharing this post!